The preservation of personal history often relies on a collection of silent, frozen snapshots that struggle to convey the full depth of a lived experience. While high-quality photography captures the visual data of a moment, it cannot replicate the gentle sway of a childhood garden or the subtle expression of a loved one that occurred just before the shutter closed. By integrating Image to Video AI into the process of digital archiving, individuals can now transform these static relics into cinematic sequences. This transition from a fixed frame to a five-second dynamic memory allows for a more profound emotional connection, bridging the gap between historical records and modern digital storytelling.

The limitation of traditional archives is that they often feel distant and disconnected from the present. For those looking to honor their heritage, a flat image of an ancestor can feel like a cold historical artifact rather than a vibrant memory, which can make it difficult for younger generations to engage with their family history in a meaningful way. Generative technology offers a bridge across time: the software estimates the depth and likely motion in an old photograph and simulates the life that was once there. This approach does not replace the original photo but enhances its ability to communicate a story to a contemporary audience.

Bringing Historical Archives and Old Family Snapshots to Life

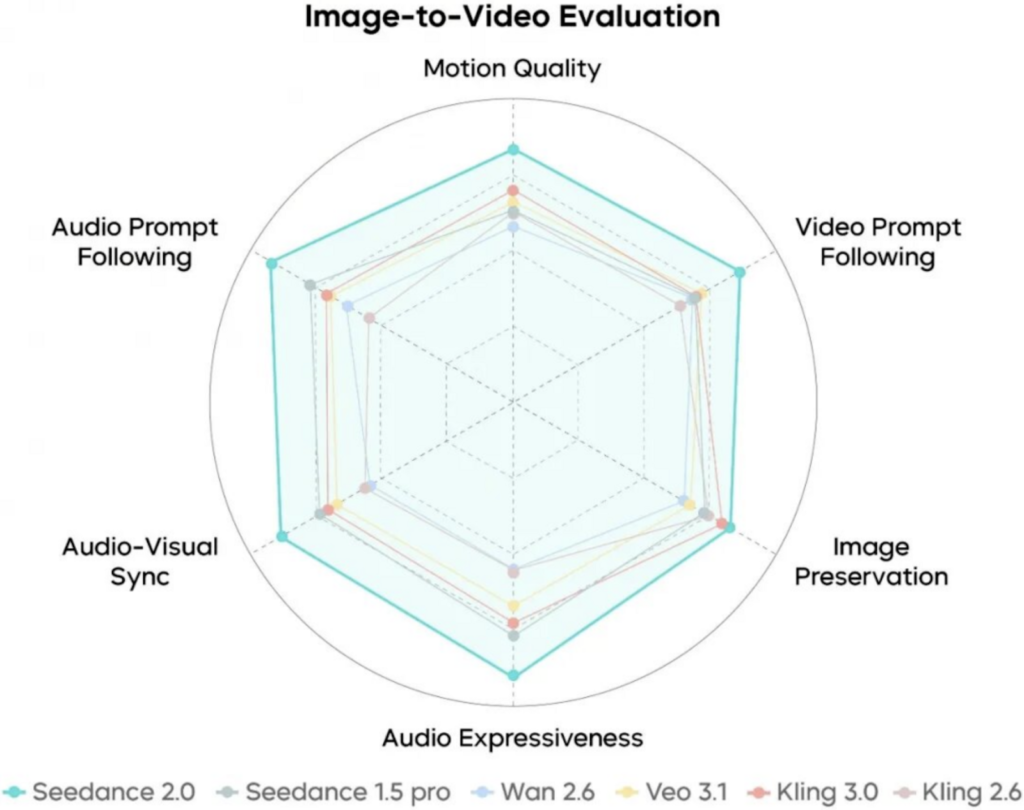

The core value of animating historical data lies in the ability of the AI to perceive depth where none was originally recorded. Modern neural networks have been trained on vast amounts of video data, allowing them to understand how a person’s shoulders might move during a breath or how light should hit the fabric of a vintage dress. Based on my observations, models like Veo 3.1 and Seedance 2.0 are particularly effective at maintaining the “soul” of the original photograph while adding just enough motion to make the scene feel interactive and alive.

In my testing, I have found that the process works best when the original image is clear and well-composed. The AI appears to use the textures and edges within the file to infer the depth of the scene, so that when the camera “moves” within the video, the perspective remains logically consistent. This is a significant improvement over earlier animation techniques that often resulted in distorted or “wobbly” figures. Today, the output looks more like a high-definition film clip salvaged from a lost archive, providing a window into the past that feels remarkably clear.

Developing Emotional Resonance Through Subtle Human Interaction Effects

The platform offers specialized tools that target the most difficult aspects of human motion, such as the “AI Hug” or “AI Kiss” features. These modules are specifically designed to handle the complex physics of two people interacting, which is a common challenge for standard generative models. By using these targeted effects, a user can take a photo of two relatives who haven’t seen each other in years and create a sequence where they share a brief, realistic embrace. This type of motion provides an emotional weight that a static photo simply cannot carry.

In my experience, these human-centric effects are most successful when the prompt is kept simple and focused on the emotion of the scene. Each effect already encodes the basic structure of a hug or a dance, so the user only needs to guide the “intensity” or “speed” of the movement. This simplicity allows family historians to focus on the narrative value of the clip rather than the technical details of the animation. The result is a piece of media that feels deeply personal and respectfully handled, making it ideal for anniversary tributes or digital memorials.

Achieving Realistic Skin Textures and Facial Stability in Portraits

One of the primary concerns when animating old photos is preserving the likeness of the individual. Based on my observations, the integration of Sora 2 and Nano Banana Pro models has led to a major breakthrough in facial stability. These models ensure that the eyes, mouth, and skin textures remain consistent as the person moves. In my testing, I noticed that the AI even maintains the unique grain or “sepia” tone of an original vintage photo if the user directs it to do so, ensuring that the new motion feels like it belongs to the era the photo was taken in.

The ability to maintain these fine details is crucial for creating a believable video. If a face morphs or changes shape, the emotional connection is immediately broken. By focusing on high-fidelity rendering, the platform ensures that the person in the video looks exactly like the person in the photo, only now they are blinking, smiling, or looking toward the camera. This level of technical precision is what transforms a digital experiment into a genuine tool for legacy preservation, allowing the past to be experienced in a way that feels modern and accessible.

A Simple Systematic Workflow for Digital History Preservation

Transforming a historical photo into a dynamic asset follows a streamlined four-step process that requires no prior experience with video editing software. The entire operation is handled through a cloud-based interface, making it accessible from any device.

- Upload Historical Source: Select a clear scan of your photo in JPEG or PNG format. High-resolution scans provide the AI with more data to work with, resulting in a cleaner five-second video.

- Provide Motion Direction: Type a short prompt describing the desired movement. For example, you might request “gentle smile and a slow nod” or “the wind blowing softly through the hair.”

- Wait for AI Synthesis: The platform typically takes about five minutes to process the request using models like Seedance 2.0. This wait gives the model time to render the motion and lighting for the clip.

- Export the Final Clip: Once the status is completed, you can preview the MP4 video. If the motion looks natural, download the file to add to your family’s digital archive or share it with relatives.
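The checks and the waiting step in the workflow above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the platform's interface: the article names no programmatic API, so `is_supported_scan`, `wait_for_completion`, the `get_status` callable, and the `"completed"` status string are all hypothetical, and the ten-minute timeout is simply a margin over the roughly five minutes mentioned above.

```python
import time
from pathlib import Path
from typing import Callable

# Source formats named in the upload step (JPEG or PNG).
SUPPORTED_SUFFIXES = {".jpg", ".jpeg", ".png"}


def is_supported_scan(path: str) -> bool:
    """Return True if the file name ends in a JPEG or PNG extension."""
    return Path(path).suffix.lower() in SUPPORTED_SUFFIXES


def wait_for_completion(
    get_status: Callable[[], str],
    poll_seconds: float = 15.0,
    timeout_seconds: float = 600.0,  # assumption: margin over ~5 minutes
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll until the status reads "completed" or the timeout passes.

    `get_status` is a hypothetical stand-in for however the job status
    is obtained; it is not a real platform call.
    """
    waited = 0.0
    while waited <= timeout_seconds:
        if get_status() == "completed":
            return True
        sleep(poll_seconds)
        waited += poll_seconds
    return False
```

Passing `sleep` as a parameter keeps the loop easy to try out without real delays, for example by supplying a function that does nothing and a `get_status` that returns canned values.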

Evaluating the Impact of Motion on Personal and Historical Archives

To understand why motion is becoming a standard in digital archiving, it is helpful to compare the attributes of traditional photos against AI-generated video sequences across several engagement categories.

| Archive Metric | Traditional Static Photography | Image to Video |
| --- | --- | --- |
| Narrative Clarity | Limited to a single moment | Shows a clear progression of life |
| Emotional Depth | Passive and representative | Immersive and atmospheric |
| Viewer Engagement | Often scrolled past quickly | Holds attention for the full 5 seconds |
| Production Time | Instant capture (original) | About 5 minutes of automated generation |
| Physical Realism | Static and flat | Dynamic lighting and depth |
| Output Format | JPEG or PNG | MP4 (high compatibility) |

Directing Cinematic Perspective and Camera Paths in Family Reels

For those looking to create more than just a simple animation, the platform allows for “virtual camera” controls. By directing the AI to perform a slow zoom or a gentle pan, you can create a feeling of discovery within the photo. For example, starting with a wide shot of a family gathering and slowly zooming into a specific person’s face can create a powerful narrative arc in just five seconds. In my testing, these camera moves help ground the AI’s motion, making it feel more like a conscious film choice than a random effect.

This level of control allows users to act as the cinematographer of their own family history. You can decide what the viewer should focus on and how they should “enter” the scene. Based on my experience, adding a camera pan often reveals details in the background of a photo that might have been ignored in the static version. This leads to a richer understanding of the environment and the context in which the photo was taken, providing a more comprehensive look at a moment that happened decades ago.

Navigating Technical Boundaries for the Best Possible Historical Renders

While the technology is incredibly advanced, it is important for users to understand its current limits to achieve the best results. Currently, the generated videos are optimized for a high-impact five-second duration. This is perfect for short social media tributes or as B-roll in a larger family documentary, but it requires the user to think in short bursts of motion. Additionally, extremely cluttered or blurry photos can sometimes lead to minor visual artifacts, which is a common characteristic of modern generative AI.

Based on my observations, the key to a good render is iterative prompting. If the first attempt is not exactly as you imagined, slightly adjusting the wording of your request can often yield a better result. I have found that short, specific prompts focusing on one or two movements usually perform better than long, complex instructions. Recognizing these boundaries and working within them makes for a more predictable and successful creative experience, helping every piece of family history to be preserved at the highest quality the tools allow.
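One way to stay within that prompting guideline is to assemble each prompt from a short list of movements. The following is a minimal sketch, assuming a cap of two movements taken from the advice above; `build_prompt` and its parameters are illustrative names and not part of any platform API.

```python
from typing import Optional, Sequence

MAX_MOVEMENTS = 2  # assumption drawn from the "one or two movements" advice


def build_prompt(movements: Sequence[str], camera: Optional[str] = None) -> str:
    """Join one or two movement phrases, plus an optional camera move."""
    cleaned = [m.strip() for m in movements if m.strip()]
    if not 1 <= len(cleaned) <= MAX_MOVEMENTS:
        raise ValueError(
            f"expected 1 to {MAX_MOVEMENTS} movements, got {len(cleaned)}"
        )
    prompt = " and ".join(cleaned)
    if camera and camera.strip():
        prompt += f", {camera.strip()}"
    return prompt
```

For example, `build_prompt(["gentle smile", "a slow nod"], camera="slow zoom in")` returns `"gentle smile and a slow nod, slow zoom in"`. When iterating, changing one phrase at a time makes it easier to see which wording affected the result.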